Security is a critical aspect of software development. But that criticality doesn’t stop it getting overlooked.

Beyond the basics, most developers aren’t taught anything about building secure codebases, and in the rush to meet deadlines or launch new features, making sure there are no security issues with your code falls far down the priority list. A lot of teams effectively choose security through obscurity as their design principle.

This can be fatal for a product. It only takes one mistake, one breach, or one attack to bring down your application or even your company.

But the attack surface for security exploits is large, so how can you possibly catch everything? That’s where the OWASP Top 10 list of security vulnerabilities comes in. OWASP, the Open Web Application Security Project, has laid the foundation for understanding online security issues. Their Top 10 is a list of the most common vulnerabilities they see on the modern web.

Let’s go through this list, looking at examples of each, along with how engineers can fix these vulnerabilities in their codebases and how engineering managers can help their teams identify these issues by giving them a better understanding of their codebases.

The OWASP Top 10 security problems with your codebase, and how engineers can fix them

1. Broken Access Control

Broken access control is a security vulnerability where an application fails to properly enforce the policy that restricts users to their intended permissions. This can result in unauthorized disclosure, modification, or destruction of data, or actions performed outside the user's privileges.

This could be due to not adhering to the principle of least privilege, insecure object references, missing access controls or CORS misconfiguration, along with a ton of other issues.

Let's take the example of a commonly used web server like Apache. One default in Apache's `httpd.conf` file that can be insecure is directory listing. On some distributions, when you access a directory on the Apache server that doesn't have an index file (like index.html or index.php), the server shows all the files in that directory. This is because the option that controls this behavior, Indexes, is enabled by default.

The Indexes option is what enables directory listing. If an attacker knows or guesses the directory structure, they could potentially access sensitive data. You should use the -Indexes option to disable directory listing, and prevent unauthorized access to files.
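As a rough illustration, the change could look like the fragment below. The directory path is a placeholder, not something taken from a real setup, and your distribution may keep this block in a different file.

```apache
# Hypothetical document root; adjust the path to your own layout.
<Directory "/var/www/html">
    # The leading minus removes Indexes from the inherited options,
    # so a directory without an index file is no longer listed.
    Options -Indexes
</Directory>
```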

2. Cryptographic Failures

Inadequate encryption can result from using outdated or weak encryption algorithms, improperly managing encryption keys, or not using encryption where it's needed. This could expose sensitive data like user passwords, personal information, or confidential business data, to attackers.

Encrypting data at rest and in transit is a crucial aspect of data security, and it's vital in maintaining users' trust and complying with data protection regulations.

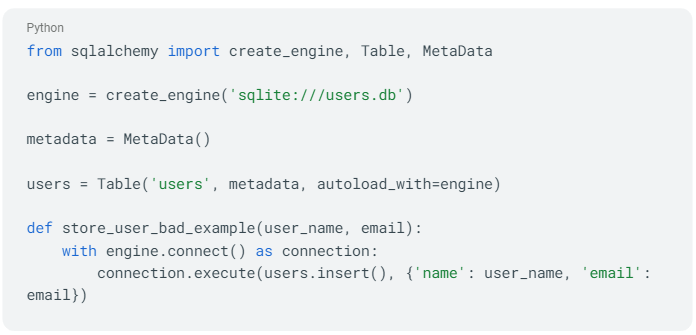

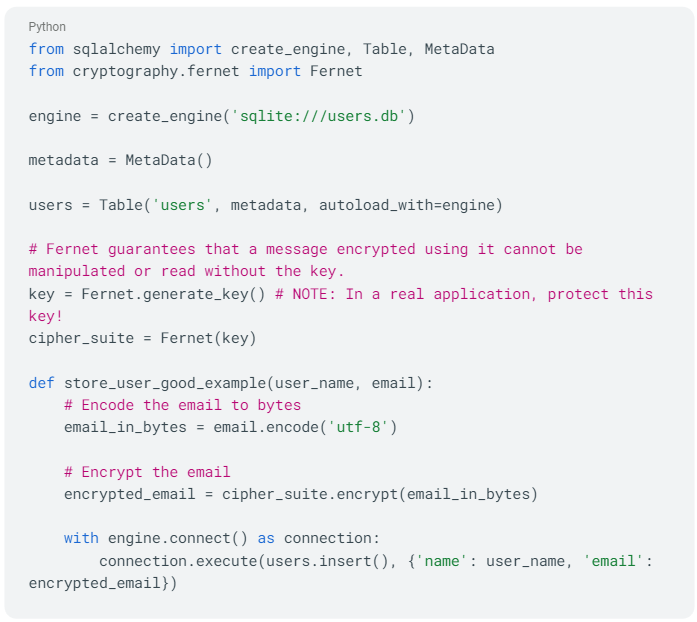

Here we’ll use the example of saving personal information (like a user's email) to a database. In Python, we might use an ORM like SQLAlchemy to interact with our database. Don’t do this:

Here, the user's email address is stored directly in the database without any encryption. If the database is compromised, the attacker would have access to all the emails.

The `Fernet` class from the cryptography library provides easy-to-use symmetric encryption for our data. Now let's revise this to include encryption using the `Fernet` class:

In the second version, before storing the email address in the database, we encrypt it using Fernet symmetric encryption. The email is first encoded to bytes (since Fernet operates on bytes), then encrypted, and finally stored in the database. This means that even if the database is compromised, the email addresses remain unreadable, as long as the encryption key is stored separately and is not compromised along with it.
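A minimal sketch of the encrypted version might look like the following. It assumes the `cryptography` package is installed, and it uses the standard library's `sqlite3` instead of SQLAlchemy to stay self-contained. The table, column, and function names are hypothetical, and generating the key inline is for demonstration only.

```python
import sqlite3

from cryptography.fernet import Fernet

# Assumption: a real system loads this key from a secrets manager or an
# environment variable. Generating it here is only for the demo.
key = Fernet.generate_key()
fernet = Fernet(key)


def save_user(conn, email):
    # Fernet works on bytes, so encode the email before encrypting it.
    token = fernet.encrypt(email.encode("utf-8"))
    conn.execute("INSERT INTO users (email_encrypted) VALUES (?)", (token,))
    conn.commit()


def load_emails(conn):
    # Decrypt each stored token back into a readable string.
    rows = conn.execute("SELECT email_encrypted FROM users").fetchall()
    return [fernet.decrypt(row[0]).decode("utf-8") for row in rows]


# Hypothetical in-memory table used only for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, email_encrypted BLOB)"
)
save_user(conn, "ada@example.com")
```

Anyone who reads the `users` table directly sees only Fernet tokens. Only code that holds the key can call `load_emails` and get the plaintext back.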

3. Injection

Not validating/sanitizing your inputs is a common mistake where a developer neglects to properly check or cleanse data provided by a user or an external system before using it in their program.

- Input validation ensures that the program receives the type of data it expects, in the format it expects.

- Input sanitization involves removing or altering potentially harmful data to prevent these types of attacks.

If a program blindly trusts inputs, it could be vulnerable to a variety of attacks. For example, a malicious user could provide input that includes code intended to exploit the system, such as SQL Injection or Cross-Site Scripting (XSS) attacks.

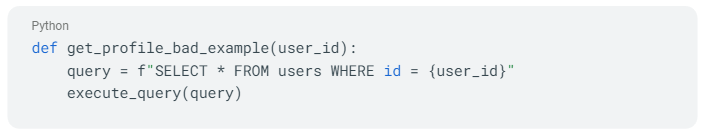

Suppose you have a function in a web application that takes a user input to query a database for a specific user profile:

This code is dangerous because it directly incorporates user input into the SQL query. A malicious user could provide a `user_id` like `1 or 1=1`, which would turn the SQL query into `SELECT * FROM users WHERE id = 1 or 1=1`, effectively returning all users.

Just like Bobby Tables:

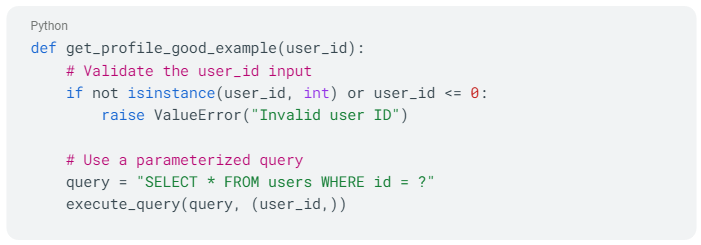

Now, let's see how to mitigate this with input validation and query parameterization:

This code mitigates the issue by:

- Validating the input: It checks that the `user_id` is an integer and is greater than 0. If the input is not valid, it raises an error.

- Using a parameterized query: It separates the data (user_id) from the command (SQL query), which ensures that the user_id cannot change the structure of the SQL query.

While this example is quite simple, it illustrates the fundamental principle of validating and sanitizing inputs.
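To make the contrast concrete, here is a small sketch of both versions using Python's built-in `sqlite3` module. The function names and the in-memory `users` table are assumptions made for the illustration, not code from a real application.

```python
import sqlite3


def get_user_profile_unsafe(conn, user_id):
    # DON'T: the user input is pasted straight into the SQL text.
    query = f"SELECT id, name FROM users WHERE id = {user_id}"
    return conn.execute(query).fetchall()


def get_user_profile(conn, user_id):
    # Validate: only positive integers are accepted. bool is rejected
    # explicitly because it is a subclass of int in Python.
    if isinstance(user_id, bool) or not isinstance(user_id, int) or user_id <= 0:
        raise ValueError("user_id must be a positive integer")
    # Parameterize: the driver passes user_id as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE id = ?", (user_id,)
    ).fetchall()


# Hypothetical in-memory table used only for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
```

Calling `get_user_profile_unsafe(conn, "1 OR 1=1")` returns every row in the table, while `get_user_profile(conn, "1 OR 1=1")` raises a `ValueError` before any SQL runs.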

4. Insecure Design

Insecure design encompasses various weaknesses resulting from missing or ineffective control design. It is distinct from insecure implementation, as design flaws and implementation defects have different causes and remediation processes.

Even with a secure design, implementation defects can still lead to vulnerabilities that may be exploited. Insecure design cannot be resolved solely through perfect implementation because necessary security controls were never created to defend against specific attacks.

Some of the things you can do to prevent insecure design are fostering a culture and methodology of secure design that constantly evaluates threats and robustly designs and tests code to prevent known attack methods, integrating threat modeling, and writing unit and integration tests to validate resilience against the threat model.

5. Security Misconfiguration

Security misconfiguration can be something as simple as having the default passwords for systems still enabled, or as complex as setting the correct security headers and directives on API responses.

But an increasingly common security concern is committing private keys to a repository, either public or private.

This exposes systems and resources protected by those keys, allowing attackers to impersonate legitimate users, gain unauthorized access, and potentially compromise sensitive data or control critical infrastructure. GitHub’s secret scanning helps mitigate this, but only after the keys have been committed.

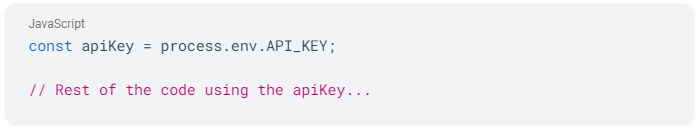

To mitigate this before pushing code, it is crucial to separate the private key from the code and avoid committing it directly.

The private API key here is fetched from an environment variable (`API_KEY`). By using environment variables, you can keep the private key separate from the codebase.

By adopting this approach, you can avoid committing private keys to version control and protect sensitive information from public exposure.
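In Python, reading the key from the environment could look roughly like this. The `load_api_key` helper and its optional `env` parameter are assumptions made for the sketch; `API_KEY` is the variable name used above.

```python
import os


def load_api_key(env=os.environ):
    # Read the key from the environment instead of hard-coding it.
    api_key = env.get("API_KEY")
    if not api_key:
        # Fail loudly so a missing key is caught at startup,
        # not in the middle of a request.
        raise RuntimeError("API_KEY environment variable is not set")
    return api_key
```

Passing the environment in as a parameter is a small design choice that makes the function easy to test without touching the real process environment.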

6. Vulnerable and Outdated Components

Continuing to use older versions of libraries that your product depends on is a huge security risk. These older versions may contain known vulnerabilities that have been fixed in more recent versions. Attackers often exploit these known vulnerabilities to compromise systems. An example that had significant consequences is the Heartbleed Bug.

The Heartbleed Bug was a serious vulnerability in the popular OpenSSL cryptographic software library, used to implement SSL/TLS protocols and encrypt communication on the internet. This weakness allowed attackers to steal protected information by reading the memory of systems protected by the vulnerable versions of the OpenSSL software.

Additionally, older libraries and frameworks may no longer be supported by their developers, meaning that new vulnerabilities discovered in these components may not get patched.

7. Identification and Authentication Failures

When a user logs into a web application, a session is created to maintain the user's state and track their interactions. The session is usually associated with a unique session ID, which should be securely generated and managed.

Insecure handling of session management could include:

- failing to protect the session ID from interception (e.g., by not using HTTPS)

- not regenerating the session ID after login or after a certain time period

- not properly invalidating the session after logout.

Such mistakes can expose the application to session hijacking, where an attacker can steal or predict the session ID and impersonate the user, potentially gaining access to sensitive data or functionality. Therefore, secure session management is crucial for maintaining the security and integrity of web applications.

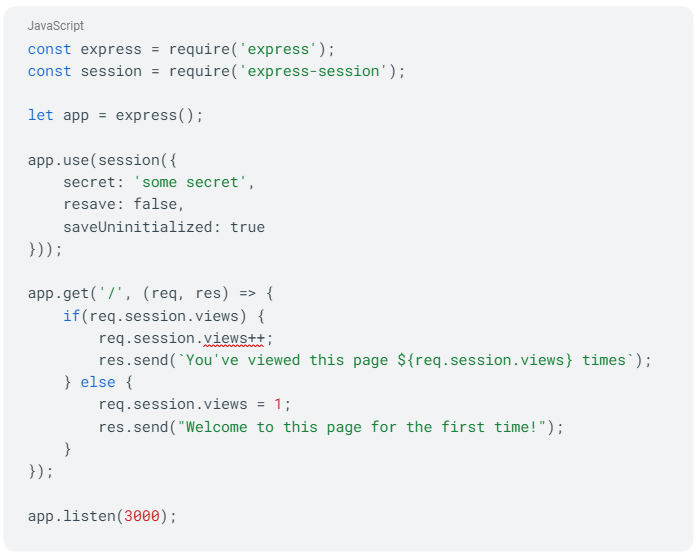

Here's an example of session management that's missing important security features:

In the above code, we're using the express-session middleware for session handling, but it's not fully secured. The session cookie is not set to be httpOnly (to prevent access from JavaScript and mitigate XSS attacks) and secure (to ensure the cookie is only sent over HTTPS).

Additionally, the session ID is not being regenerated after login, which leaves the session susceptible to session fixation attacks.

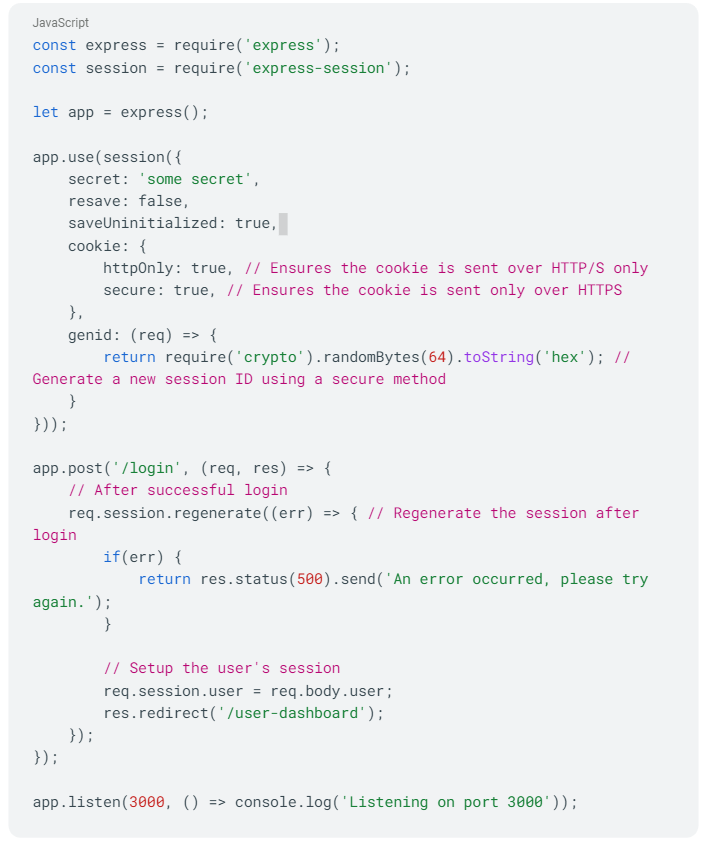

Now, let's revise this to use better security practices:

Here, we're setting the httpOnly and secure options for the session cookie, which protects it from XSS attacks and ensures it's only sent over HTTPS. We're also generating the session ID using a secure method. After the user logs in, we regenerate the session to prevent session fixation attacks. These changes make the session management of the application significantly more secure.
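The example discussed above is built on the express-session middleware. As a framework-free illustration of the same ideas, here is a rough Python sketch that uses only the standard library. The in-memory `sessions` store and all function names are hypothetical, and a real application would normally rely on its web framework's session support instead.

```python
import secrets
from http.cookies import SimpleCookie

# Hypothetical in-memory session store: session ID -> session data.
sessions = {}


def new_session(data=None):
    # Generate an unpredictable session ID from a cryptographic RNG.
    session_id = secrets.token_urlsafe(32)
    sessions[session_id] = dict(data or {})
    return session_id


def login(old_session_id, username):
    # Regenerate the ID on login so a pre-login ID planted by an
    # attacker (session fixation) stops being valid.
    data = sessions.pop(old_session_id, {})
    session_id = new_session(data)
    sessions[session_id]["user"] = username
    return session_id


def logout(session_id):
    # Invalidate the session on the server, not just in the browser.
    sessions.pop(session_id, None)


def session_cookie_header(session_id):
    cookie = SimpleCookie()
    cookie["session_id"] = session_id
    cookie["session_id"]["httponly"] = True  # hidden from page JavaScript
    cookie["session_id"]["secure"] = True    # only sent over HTTPS
    cookie["session_id"]["path"] = "/"
    return cookie["session_id"].OutputString()
```

The value returned by `session_cookie_header` is what would go into a `Set-Cookie` response header, with the `HttpOnly` and `Secure` attributes included.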

8. Software and Data Integrity Failures

Software and data integrity failures occur when code and infrastructure lack measures to protect against integrity violations. For instance, relying on plugins, libraries, or modules from untrusted sources, repositories, and content delivery networks (CDNs) can compromise integrity. Insecure CI/CD pipelines also pose risks of unauthorized access, malicious code injection, or system compromise.

To prevent these failures, use digital signatures to verify software, make sure libraries and dependencies come from trusted repositories, and implement a code review process for code and configuration changes to minimize the risk of introducing malicious code or configuration into the software pipeline.
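Full signature verification depends on your packaging tooling, so the sketch below shows a simpler relative of it: checking a downloaded artifact against a published SHA-256 checksum. The function names are hypothetical, and a checksum only helps if the expected value comes from a channel you trust.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    # Hex-encoded SHA-256 digest of the artifact's bytes.
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, expected_sha256: str) -> bool:
    # compare_digest avoids leaking where the two strings differ.
    return hmac.compare_digest(sha256_hex(data), expected_sha256.lower())
```

A build script could call `verify_artifact` right after downloading a dependency and refuse to continue when it returns `False`.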

9. Security Logging and Monitoring Failures

Security logging and monitoring failures stem from an inadequate implementation of logging, which results in a failure to effectively detect, respond to, and investigate security incidents. This might be through lack of real-time monitoring, inadequate log retention, or failure to conduct audits and reviews.

At its most basic it might be insufficient log coverage, with critical security events and activities not being logged, leading to a lack of visibility into potential security threats or suspicious activities. This could include neglecting to log failed login attempts, privilege escalations, or unauthorized access attempts.

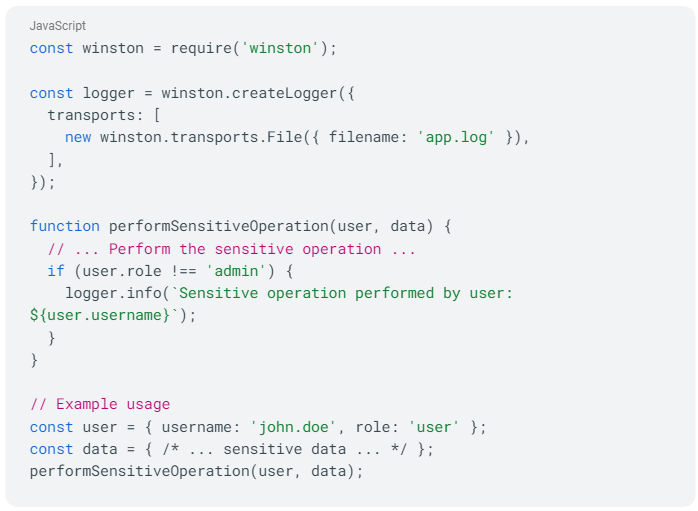

Here's an example illustrating insufficient log coverage:

In this code, a sensitive operation is being performed, and the intention is to log details when the operation is performed by a non-admin user. However, there's insufficient log coverage because the log statement is only triggered for non-admin users. If the operation is performed by an admin user or an attacker with admin privileges, no log entry is generated.

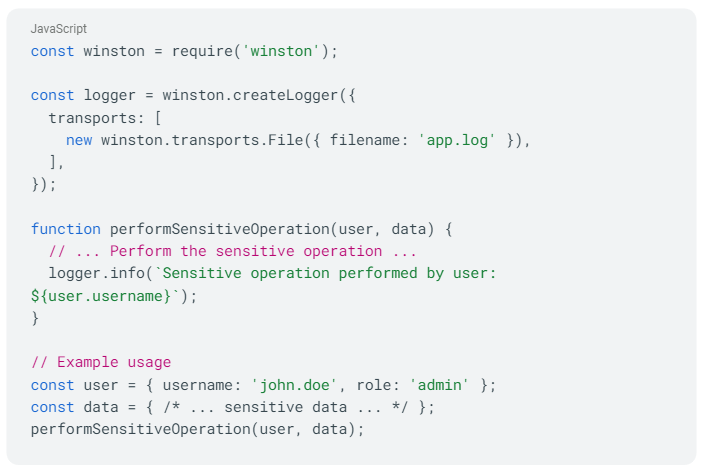

To improve log coverage and ensure comprehensive logging, you should log the occurrence of sensitive operations regardless of the user's role. Here's an updated example with improved log coverage:

In this revised code, the log statement is triggered for all sensitive operations, regardless of the user's role. This ensures that all occurrences of the sensitive operation are properly logged, providing better log coverage for monitoring and detecting potential security incidents.
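As a rough Python sketch of the improved pattern, using the standard `logging` module: the `delete_account` operation, the shape of the `actor` dictionary, and the logger name are all assumptions made for the illustration.

```python
import logging

# Dedicated logger for security-relevant events.
audit_log = logging.getLogger("audit")
audit_log.setLevel(logging.INFO)


def delete_account(actor, target_user_id):
    # Log every attempt, whatever the actor's role, before doing the work.
    audit_log.info(
        "sensitive_operation=delete_account actor=%s role=%s target=%s",
        actor["name"], actor["role"], target_user_id,
    )
    # ... the actual deletion would happen here (omitted in this sketch) ...
    return True
```

The logger still needs a handler attached (for example through `logging.basicConfig`) before these entries are written anywhere, and in production you would route them to storage that the application itself cannot silently modify.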

10. Server-Side Request Forgery (SSRF)

Server-Side Request Forgery (SSRF) is a web security vulnerability where an attacker can trick a server into making unintended requests on their behalf.

In an SSRF attack, the attacker typically crafts a request that specifies a target URL or IP address within the server's network or other restricted zones inaccessible from the external network. The server, unaware of the malicious intent, fetches the specified resource, which could include internal files, local services, or even the server itself.

This allows the attacker to exploit the server's trust in its own network and potentially gain unauthorized access to sensitive information or conduct further attacks.

SSRF attacks can have severe consequences, such as bypassing firewalls, compromising internal systems, performing port scanning, or exploiting misconfigured services. Attackers may use SSRF to exfiltrate data, launch attacks against internal infrastructure, or pivot to other parts of the target network.

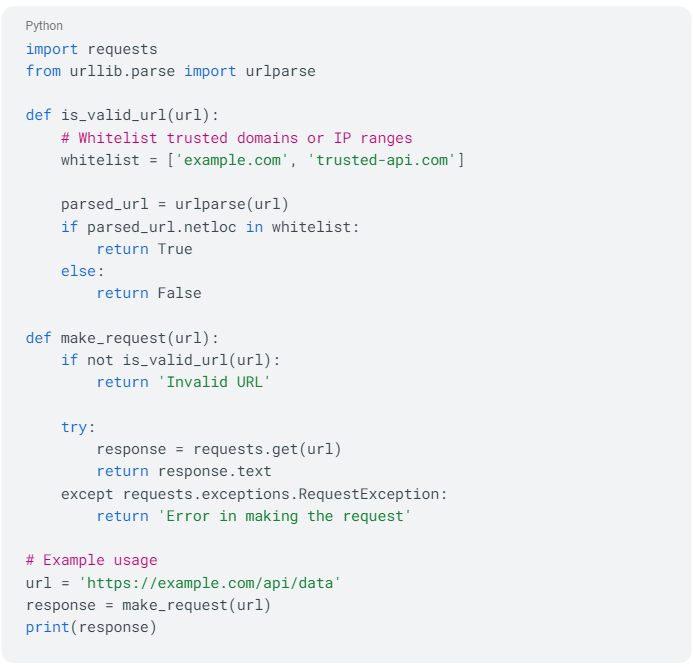

You can prevent this through input validation and whitelisting. You validate and sanitize user-supplied input, especially URLs or IP addresses, to prevent the injection of malicious payloads or bypassing of filters.

In this code, the is_valid_url function checks whether a given URL is from a trusted domain or IP range defined in the whitelist. If the URL passes the validation, the make_request function proceeds with making the HTTP request using the `requests` library.

By employing input validation and whitelisting, we ensure that requests are only made to trusted domains or IP ranges. This helps mitigate the risk of SSRF attacks by preventing unauthorized requests to internal or restricted resources.

In real-world scenarios, the validation and whitelisting mechanisms may be more complex, taking into account additional factors such as URL schemes, ports, or specific endpoints. The whitelist also needs to be regularly reviewed and updated to reflect the current set of trusted domains or IP ranges.
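The `is_valid_url` and `make_request` functions described above could look roughly like the sketch below. The allowed hosts are placeholders, and the sketch uses the standard library's `urllib` instead of `requests` so that it runs without extra dependencies.

```python
from urllib.parse import urlparse
from urllib.request import urlopen

# Hypothetical whitelist; replace with the hosts your service really needs.
ALLOWED_HOSTS = {"api.example.com", "cdn.example.com"}
ALLOWED_SCHEMES = {"https"}


def is_valid_url(url):
    try:
        parsed = urlparse(url)
    except ValueError:
        return False
    # hostname is the host the request would actually go to, so tricks
    # like "https://api.example.com@127.0.0.1/" are rejected.
    return parsed.scheme in ALLOWED_SCHEMES and parsed.hostname in ALLOWED_HOSTS


def make_request(url, timeout=5):
    if not is_valid_url(url):
        raise ValueError("URL is not on the whitelist")
    with urlopen(url, timeout=timeout) as response:
        return response.read()
```

This only checks the URL that was submitted. A production version would also need to think about redirects and about hostnames that resolve to internal addresses.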

How engineering managers can help their team fix codebase vulnerabilities

It’s worryingly common for this problem to basically fall on the shoulders of frontline developers. With no guidance, they won’t know where their mistakes are. And with a never-ending range of possible attacks, developers can’t possibly get it right every time without support.

This is where visualizing your codebase and automating security within it can help. If engineering managers can set up their processes to constantly highlight security threats, then it makes it much easier for developers to notice issues, and it spreads the important burden of security to the entire organization.

Here are three things engineering managers can give their team to help with security.

A map to their codebase

Visualizing a codebase through a code visualization tool like CodeSee can provide numerous benefits when it comes to understanding the security of that codebase:

- Identify Potential Weak Points: Visualization tools can highlight areas in the codebase where potential vulnerabilities may exist. For instance, they can point to spots with high code complexity, which are often prone to errors due to the difficulty of maintaining and debugging them. Visual representations can also help identify insecure coding practices or deprecated functions that need attention.

- Better Understanding of Code Flow: Code comprehension tools can provide an overview of the code's flow and architecture, making it easier to understand how data moves through the application. By understanding the data flow, you can better identify potential security risks like areas where sensitive data might be exposed or where input isn't being properly sanitized, opening the potential for injection attacks.

- Highlighting Dependencies: Visualizations can make it easier to see dependencies between different parts of the codebase. This is helpful for identifying security risks because if a vulnerability is found in one part of the codebase, any dependent parts are potentially compromised as well.

- Visualizing Changes Over Time: Some tools enable the visualization of code changes over time, which can help identify when and where new potential security risks were introduced. This can be useful for version control and tracking down when a vulnerability first appeared.

- Ease of Communication: Visual representations can serve as a powerful tool for communicating about the codebase's security status among diverse team members. Non-technical stakeholders may find it easier to understand security issues through visual representation rather than through lines of code or written reports.

- Promoting Code Reviews and Collaboration: By visualizing the codebase, team members can easily participate in code reviews and spot potential security issues. This is particularly helpful in a team where some members might be more familiar with the codebase than others.

With CodeSee, you can quickly see areas of complexity in your code that might be susceptible to security issues. Using interactive code tours, you can then highlight these areas for your team as code that needs to be refactored and secured:

If we take a look at the OWASP list above, both Vulnerable and Outdated Components and Insecure Design are prime examples where having a visual representation of your code, and being able to see the components in use, can have a positive impact on the security of the codebase.

Visualization tools don’t replace static code analysis or pen testing, but they are great to use as part of a comprehensive, multi-layered approach to securing your code.

Checklists. Checklists. Checklists.

In The Checklist Manifesto: How to Get Things Right, Atul Gawande, a surgeon and public health researcher, laid out the incredible importance of checklists for improving medical outcomes.

Gawande argued that medicine has become increasingly complex, with a multitude of possible diagnoses, treatments, and procedures for any given patient. This complexity has made it increasingly difficult for any one individual to remember and properly apply every necessary step in patient care. As a result, errors can and do occur, which can lead to negative outcomes for patients.

Inspired by checklists used in aviation and other industries where errors can have catastrophic results, Gawande and his team developed a surgical safety checklist in collaboration with the World Health Organization. The checklist included simple but crucial steps to be taken at three points in any surgical procedure: before anesthesia is administered, before the incision is made, and before the patient is taken out of the operating room.

The use of the checklist resulted in a significant reduction in complications and deaths associated with surgery in hospitals where it was implemented. The simplicity of the tool belies its profound impact: by providing a standard procedure to be followed in every case, the checklist helps to catch potential errors before they occur, ensuring a higher level of care for every patient.

Does all this sound familiar? Codebases have become increasingly complex. This complexity means individual developers can’t remember every step to secure the codebase. So errors occur.

Checklists can play an important role in improving codebase security in multiple ways:

- Ensuring Consistency: A well-designed checklist ensures that security checks are consistently carried out every time changes are made to the codebase. This reduces the likelihood of a security check being skipped or forgotten.

- Promoting Best Practices: A checklist can help enforce good security practices and encourage developers to always consider security implications when writing code. This could include checks for secure coding practices, such as proper input validation and output encoding, the principle of least privilege, and the use of secure libraries and frameworks.

- Assisting Code Reviews: A security checklist can be used during code reviews to ensure that all necessary security checks have been conducted. It provides a structure for the reviewer, ensuring that security considerations are systematically addressed.

- Increasing Awareness: By using a checklist, you ensure that security is a topic that is always considered, increasing the awareness of the team members to potential security issues and the need for secure development practices.

- Documenting Security Efforts: Checklists also serve to document what security measures have been taken, which can be important for audits, meeting regulatory requirements, or for discussions with customers or partners concerned about security.

- Onboarding New Team Members: For new team members or those less familiar with security practices, a checklist can serve as an educational tool and a guide to the security expectations within the project.

- Mitigating Human Error: Checklists help mitigate human error by providing a written guide that can be followed step by step. This reduces the risk of a step being missed due to forgetfulness or misunderstanding.

A well-thought-out security checklist is a valuable tool for enhancing the security of a codebase. It helps ensure that essential security considerations are not overlooked, encourages consistent application of secure coding practices, and serves as a valuable reference and documentation tool.

With CodeSee’s code automations, you can automatically add security checklists to your PRs. Almost all the vulnerabilities on the OWASP list can be mitigated with a checklist to make sure the developer hasn’t missed anything (or has added something they shouldn't). But a great example would be Security Misconfiguration. If code is pushed with sensitive information, a checklist can be added to the PR to push the engineer to quickly fix the problem:

- Condition: If new code, contains, sk_

- Action:

- Add to checklist: You’ve committed the private Stripe key to the repo. This is BAD.

- Add to checklist: Remove the private key and contact the security team. This key needs to be rotated.
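To show the logic behind a rule like this, here is a hypothetical Python sketch. It is not CodeSee's implementation. It scans the added lines of a diff and returns the checklist items when something that looks like a Stripe secret key appears. The rule above matches any `sk_`, while the sketch narrows that to the `sk_live_` and `sk_test_` prefixes that Stripe secret keys use.

```python
import re

# Stripe secret keys start with sk_live_ or sk_test_.
SECRET_KEY_PATTERN = re.compile(r"sk_(?:live|test)_[0-9A-Za-z]+")

CHECKLIST_ITEMS = [
    "You've committed the private Stripe key to the repo.",
    "Remove the private key and contact the security team. "
    "This key needs to be rotated.",
]


def checklist_for_diff(diff_text):
    for line in diff_text.splitlines():
        # Only look at added lines, skipping the "+++ b/file" header.
        is_added = line.startswith("+") and not line.startswith("+++")
        if is_added and SECRET_KEY_PATTERN.search(line):
            return list(CHECKLIST_ITEMS)
    return []
```

A pre-commit hook or CI step could run a check like this on every diff, so the problem surfaces before the key reaches the remote repository.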

Use AI as a security reviewer

Security is a “shallow but very wide” problem, which makes it ideal for AI tools. It’s shallow in that the fixes are often trivial (if you look at the code fixes above, most are clear and obvious), but it’s wide because of the sheer number of them. AI tools have the capacity to go through the thousands of possible security issues within a codebase quickly, and then suggest a solution.

Engineers can use CodeSee’s code understanding to understand the security issues with their code. For instance, you can ask the AI about any potential SOC 2 violations. You can give it a few examples of what’s good or bad, and it will scan the entire codebase looking for non-compliant code.

Make security a priority

Safeguarding your codebase is not a task to be taken lightly. Neglecting security measures, whether due to lack of awareness or competing priorities, can have severe consequences for your application and your organization as a whole.

The OWASP Top 10 list serves as a valuable resource, offering insight into the most common vulnerabilities that plague the modern web. By familiarizing ourselves with these vulnerabilities and understanding how they can manifest in our codebases, we empower both engineers and engineering managers to proactively address potential security risks.

Visualizing and automating security in your codebase is a powerful approach to mitigating vulnerabilities. Through effective code review processes, automated tools, and continuous education, we can create a culture of security-conscious development. By doing so, we not only protect our applications and users from potential attacks but also foster a sense of trust and reliability in our products.

Remember, it only takes one mistake, one breach, or one attack to disrupt your entire operation. Embracing security as a fundamental aspect of software development is an investment in the longevity and success of your organization. By prioritizing security, understanding the common vulnerabilities, and equipping our teams with the knowledge and tools to detect and address these issues, we can confidently navigate the ever-evolving landscape of cybersecurity.